Human Facial Recognition

Humans are born with a capacity for facial recognition that is shaped through repeated exposure. As infants, we quickly learn to recognize the faces of those around us and come to regard them as safe and important to our wellbeing. Human brains have evolved to become rapid pattern-matching machines. Hardwired for survival, our brains have the ability to look at something or someone and decide in a split second if it is known or unknown, friend or foe. “This faculty to instantly detect, differentiate, classify, and match faces is essential,” says RealNetworks CTO Reza Rassool. “If a tiger approaches, you don’t want to spend a lot of time analyzing whether it will cuddle or kill you. Your body needs to go instantly into fight or flight mode in order to survive.”

Unfortunately, the same aptitude for snap judgement that sometimes protects us can also cause problems. Although our facial recognition is powerful, humans can typically distinguish only about 10,000 faces (compare that to our ability to distinguish between 1 trillion smells). Our pattern-matching skills are only as strong as the information and experiences we use to train them, and we often make decisions based on poor information. All humans are biased to some extent, and bias is notoriously difficult to correct. Without exposure to a variety of faces, our recognition accuracy skews in favor of the individuals close and familiar to us.

The vast spectrum of human faces on this planet, further diversified by age, gender, and skin tone, makes facial recognition deeply complex and sets the stage for unconscious bias as we try to detect, differentiate, classify, and match individuals. A growing body of research finds that humans can reduce this bias through greater exposure to a diverse array of faces.

Machine Learning and Facial Recognition

But what about facial recognition computer algorithms? Won’t they only be as accurate as the engineers who developed them? “No,” said Milko Boic, chief architect at RealNetworks. “Our facial recognition algorithm is built on machine learning, which is very different from traditional engineering approaches. In machine learning, we start with a model: a blank electronic ‘brain.’ Then we train the model with data. The brain learns like a human does, via trial and error. The engineers simply act as coaches to guide this process.”

Boic is quick to point out that this type of machine learning allows the algorithm to exceed the capabilities of the engineers who built it. “In a typical engineering project, say building software to do my taxes, the final product can only be as good as the engineers who build the software. It will never know more than they do. But our facial recognition technology teaches itself, and the engineers act as coaches. And just as a football coach doesn’t have to be the best player on the team, a machine learning model can outpace the engineers who built it. Our algorithm is far more capable of recognizing faces than I am, or than anyone on my team.”
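To make “start with a blank model, then train it with data” concrete, here is a minimal Python sketch of that trial-and-error loop. It is an illustrative assumption, not RealNetworks’ model or training code. A tiny logistic-regression classifier starts with all-zero weights and repeatedly nudges them to shrink its errors on made-up two-feature data, which is plain gradient descent. The function names, data, learning rate, and epoch count are all hypothetical.

```python
import math
import random


def sigmoid(z: float) -> float:
    """Numerically stable logistic function."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    ez = math.exp(z)
    return ez / (1.0 + ez)


def train(samples, labels, epochs=200, lr=0.5):
    """Start from a 'blank' model (all-zero weights) and learn from its mistakes."""
    weights = [0.0] * len(samples[0])
    bias = 0.0
    for _ in range(epochs):
        for x, y in zip(samples, labels):
            # Guess: predicted probability that this sample belongs to class 1.
            p = sigmoid(sum(w * xi for w, xi in zip(weights, x)) + bias)
            # Error: how far the guess was from the true label (0 or 1).
            error = p - y
            # Correction: nudge each weight against the error (one gradient descent step).
            weights = [w - lr * error * xi for w, xi in zip(weights, x)]
            bias -= lr * error
    return weights, bias


def predict(weights, bias, x) -> int:
    """Return 1 if the model's probability is at least 0.5, else 0."""
    return int(sigmoid(sum(w * xi for w, xi in zip(weights, x)) + bias) >= 0.5)


# Hypothetical toy data: two clusters of two-feature points.
random.seed(0)
samples = [(random.gauss(2, 0.5), random.gauss(2, 0.5)) for _ in range(50)]
samples += [(random.gauss(-2, 0.5), random.gauss(-2, 0.5)) for _ in range(50)]
labels = [1] * 50 + [0] * 50

weights, bias = train(samples, labels)
accuracy = sum(predict(weights, bias, x) == y for x, y in zip(samples, labels)) / len(labels)
print(f"training accuracy: {accuracy:.2f}")
```

The engineer picks settings such as the learning rate and the number of epochs. That is the coaching. The weights themselves are learned entirely from the data, which is why the quality and balance of that data matter so much.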

Facial Recognition Technology and Bias

Does this mean that all facial recognition technology is free from bias? Certainly not. Bias in this technology has been in the news recently, as some algorithms have proven less effective at recognizing certain types of faces and more likely to conclude that faces belonging to different people are in fact the same person. In particular, certain algorithms are more likely to match two different people with dark skin as the same person. Because some facial recognition algorithms are used to match faces from video cameras against faces in criminal databases, such a false match could wrongly link an innocent person to a crime, and this has sparked a great deal of debate. “It is not safe to assume anything is free from bias,” said Rassool. “The model is only as good as the data we feed it. That’s why it’s always important to test, measure, and reduce bias.”

The RealNetworks Approach

At RealNetworks, we use a layered strategy to significantly reduce bias in our facial recognition algorithm. First, we built the algorithm on machine learning, so the product is not limited by the experience of its developers, who may themselves be biased. Second, we train on a diverse data set so that the model has enough exposure to different skin tones and facial features. This combined approach enables us to develop an algorithm that significantly reduces bias with respect to gender and skin tone.

RealNetworks Facial Recognition Tested by NIST

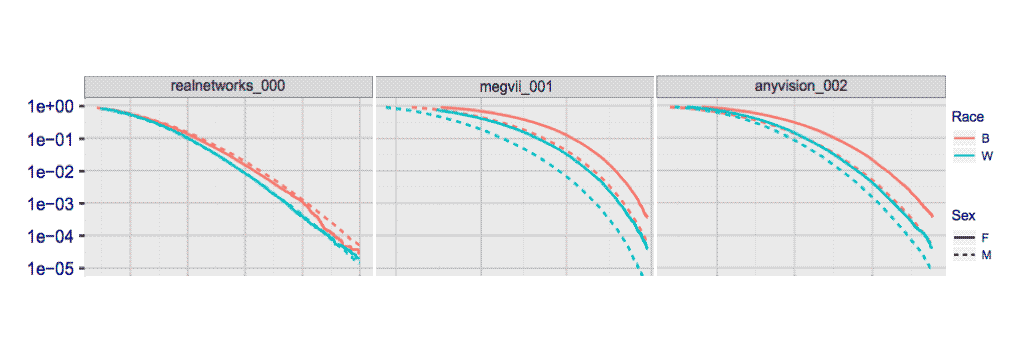

The facial recognition algorithm developed by RealNetworks recently underwent rigorous testing by NIST—The National Institute of Standards and Technology. NIST compared the RealNetworks facial recognition algorithm against other algorithms, specifically testing for signs of bias. RealNetworks was solidly among the top 5 algorithms to perform consistently across black and white skin tones. Additionally, RealNetworks’ technology shows less bias with respect to gender and skin tone compared to two market leaders. Bias would be indicated by a spread over the following curves measuring False Match Rate across the range of threshold adjustments provided by each algorithm.

Figure 1 NIST bias measurement. Separate curves appear for white females, black females, black males and white males.
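NIST’s false match rate is the fraction of impostor comparisons (pairs of images of two different people) whose similarity score meets or exceeds the match threshold. The Python sketch below is an illustration under assumed inputs, not NIST’s test harness. It computes FMR per demographic group at several thresholds and summarizes the spread between groups as the ratio of the highest to the lowest FMR. The group labels and scores are made up. In a real evaluation the groups would be the ones shown in Figure 1, and the scores would come from the algorithm under test.

```python
def false_match_rate(impostor_scores, threshold):
    """Fraction of impostor comparisons (different people) scored at or above threshold."""
    if not impostor_scores:
        raise ValueError("need at least one impostor score")
    return sum(s >= threshold for s in impostor_scores) / len(impostor_scores)


def fmr_curves(impostor_scores_by_group, thresholds):
    """Return {threshold: {group: FMR}}, one point per group on each curve."""
    return {
        t: {group: false_match_rate(scores, t) for group, scores in impostor_scores_by_group.items()}
        for t in thresholds
    }


def spread(fmr_by_group):
    """Ratio of the highest to the lowest group FMR; 1.0 means the curves coincide."""
    lowest, highest = min(fmr_by_group.values()), max(fmr_by_group.values())
    if lowest == 0:
        return 1.0 if highest == 0 else float("inf")
    return highest / lowest


# Hypothetical impostor similarity scores for two demographic groups.
impostor_scores = {
    "group_a": [0.10, 0.20, 0.35, 0.50, 0.62, 0.71],
    "group_b": [0.15, 0.30, 0.45, 0.58, 0.66, 0.80],
}
curves = fmr_curves(impostor_scores, thresholds=[0.4, 0.6])
for t, fmrs in curves.items():
    print(t, fmrs, f"spread={spread(fmrs):.2f}")
```

A ratio near 1.0 at every threshold corresponds to curves that lie on top of each other. A large ratio means one group is falsely matched much more often than another at that setting.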

In particular, the RealNetworks algorithm was the least likely to concentrate false matches on any specific facial feature or face type. “The test that shows whether false matches are concentrated around specific faces is represented as a curve,” said Boic. “The flatter the curve, the more uniform the performance across the spectrum of faces. The curve for our algorithm was the flattest among the 81 algorithms tested.”
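Boic does not spell out how NIST draws this curve, so the sketch below uses an assumed stand-in for “flatness”. It counts how many false matches each identity is involved in, then computes the Gini coefficient of those counts. A value of 0 means false matches are spread evenly across faces, and values closer to 1 mean they pile up on a few faces. The function names, identities, scores, and threshold are all hypothetical.

```python
def false_matches_per_identity(impostor_pairs, threshold, identities):
    """Count, for each identity, its impostor comparisons that scored as a match."""
    counts = {identity: 0 for identity in identities}
    for id_a, id_b, score in impostor_pairs:
        if score >= threshold:
            counts[id_a] += 1
            counts[id_b] += 1
    return counts


def gini(values):
    """Gini coefficient: 0 when all values are equal, approaching 1 when one value holds everything."""
    xs = sorted(values)
    n, total = len(xs), sum(xs)
    if n == 0 or total == 0:
        return 0.0
    weighted = sum(rank * x for rank, x in enumerate(xs, start=1))
    return (2 * weighted) / (n * total) - (n + 1) / n


# Hypothetical impostor comparisons: (identity_a, identity_b, similarity score).
pairs = [
    ("p1", "p2", 0.90),
    ("p1", "p3", 0.80),
    ("p2", "p3", 0.20),
    ("p3", "p4", 0.10),
    ("p1", "p4", 0.85),
]
counts = false_matches_per_identity(pairs, threshold=0.7, identities=["p1", "p2", "p3", "p4"])
print(counts, f"gini={gini(counts.values()):.2f}")
```

Here identity p1 is involved in three of the false matches, so the counts are uneven and the coefficient is above zero.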

All the algorithms tested were more likely to report a false negative (concluding that two images of the same person show different people) for women than for men. “We don’t really know why this is,” said Boic. “We can speculate, and continue to provide data to improve the machine learning, but that’s all at this point.” According to NIST, RealNetworks’ algorithm ranked fourth for consistency of recognition results across genders.
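A false negative in this sense is what NIST calls a false non-match: a genuine comparison (two images of the same person) that scores below the threshold. The minimal Python sketch below uses made-up scores, with hypothetical group labels standing in for the gender groups. It computes the false non-match rate (FNMR) per group and the gap between the groups.

```python
def false_non_match_rate(genuine_scores, threshold):
    """Fraction of genuine comparisons (same person) scored below threshold."""
    if not genuine_scores:
        raise ValueError("need at least one genuine score")
    return sum(s < threshold for s in genuine_scores) / len(genuine_scores)


# Hypothetical genuine-pair similarity scores for two groups.
genuine_scores = {
    "group_x": [0.91, 0.88, 0.95, 0.72, 0.85],
    "group_y": [0.90, 0.64, 0.87, 0.69, 0.93],
}
threshold = 0.7
fnmr = {group: false_non_match_rate(scores, threshold) for group, scores in genuine_scores.items()}
gap = max(fnmr.values()) - min(fnmr.values())
print(fnmr, f"gap={gap:.2f}")
```

Raising the threshold lowers the false match rate but raises FNMR. A consistency comparison like this one is therefore only meaningful at a fixed, stated threshold.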

Why did the RealNetworks algorithm do so well? “We were very careful to feed the algorithm well-balanced data,” said Boic. “If you only have a few examples of a particular facial type, the algorithm will exaggerate the importance of the facial features of that type. So, if among a large group of faces you only include four that have moustaches, the algorithm is much more likely to determine that any person with a moustache is the same individual. If you feed the algorithm thousands of faces with moustaches, it will determine that moustaches aren’t so important for recognizing faces, and will use other techniques to determine a match.” Reza Rassool agreed: “We provided the algorithm with a large, diverse pool of faces from all over the world. We also deliberately chose never to ask our algorithm to determine a person’s race from their facial geometry. This practice was scientifically debunked long ago. There’s no point in teaching our algorithm to classify people in a manner akin to a Victorian study of phrenology.”
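Boic’s fix for the moustache problem is to collect more examples. When that is not immediately possible, a common complementary technique is to audit how often each attribute appears and give the rare examples more weight during training. The article does not attribute this technique to RealNetworks. The Python sketch below flags under-represented attribute values and computes per-sample weights with the formula n_samples / (n_values * count), which is the same formula scikit-learn uses for class_weight="balanced". The attribute labels and the 10% cutoff are hypothetical.

```python
from collections import Counter


def underrepresented(attribute_values, min_share):
    """Attribute values that make up less than min_share of the data set."""
    counts = Counter(attribute_values)
    n = len(attribute_values)
    return sorted(value for value, count in counts.items() if count / n < min_share)


def balanced_weights(attribute_values):
    """Per-sample weights n / (k * count) so each attribute value carries equal total weight."""
    counts = Counter(attribute_values)
    n, k = len(attribute_values), len(counts)
    return [n / (k * counts[value]) for value in attribute_values]


# Hypothetical data set: 96 faces without a moustache, 4 with one.
attributes = ["no_moustache"] * 96 + ["moustache"] * 4
weights = balanced_weights(attributes)
print(underrepresented(attributes, min_share=0.10))
print(sum(w for w, a in zip(weights, attributes) if a == "moustache"))
```

Weighting only changes how much each example counts. Four moustache photos weighted 12.5 times are still only four photos, so gathering genuinely diverse data, as Boic describes, remains the stronger fix.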

The RealNetworks algorithm performed slightly less well in some other areas, for example with very young children, especially those aged 0 to 4. “Our algorithm is very strong, but this is an area where we will be looking to make improvements,” said Boic.

However, Boic was also quick to point out that conditions in a NIST testing lab are not the same as those in the real world. “There are other issues that can affect the accuracy of facial recognition algorithms,” he said. “Lighting is especially important. Low lighting tends to favor lighter skin tones, which show up brighter and clearer, and reduces accuracy for darker skin tones. Conversely, extremely bright lighting tends to favor darker skin tones, which show up clearly, and reduces accuracy for lighter skin tones that are ‘washed out’ by the bright light.”

Rassool pointed out the need for continuous vigilance. “There is always the potential for bias in this space,” he said. “We will keep working to make our algorithms deliver ever more consistent accuracy, and strive to identify and eradicate bias so that facial recognition works equally well for everyone in our society.”